Shipping my first open-source release

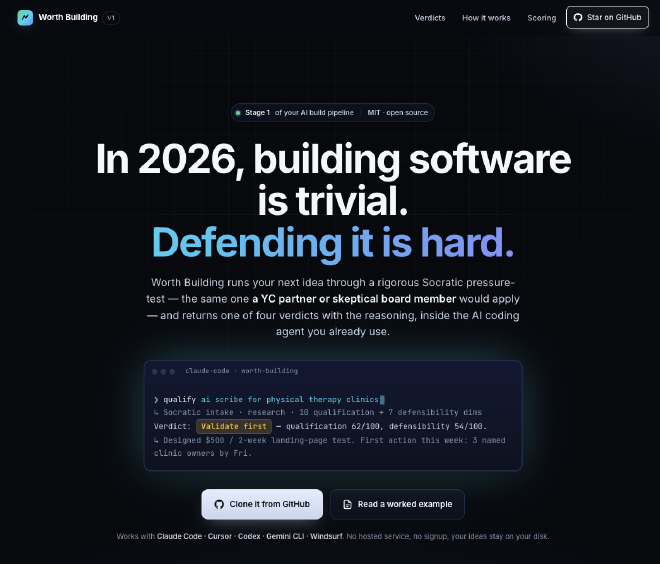

30 minutes now · 3 months saved. That’s the job Worth Building does. You bring an idea you’re tempted to commit a quarter of your life to; it pressure-tests that idea inside the AI coding agent you already use and returns one of four verdicts (build, validate, park, or no-go) with reasoning sharp enough to argue with.

I’ve always felt a quiet debt to the open-source world.

Every strategy deck I’ve shipped, every architecture I’ve drawn on a whiteboard, every proof-of-concept that found its way into a client’s roadmap: none of it would exist without decades of free, generous work by people I’ll never meet. You don’t stay in this field for twenty years without that being true.

But until now, I had never actually given anything back.

Not a pull request. Not a tiny fix to a typo in a README. Nothing. I had every excuse: not enough time, not the right idea, not sure anyone would care. The usual self-talk that keeps good intentions quietly unshipped.

What I finally published

The project is called Worth Building. It’s an AI-native framework that qualifies a business idea before you commit three months of your life to it.

You bring an idea. It runs a rigorous Socratic pressure-test, the same one a YC partner or skeptical board member would apply, inside the AI coding agent you already use (Claude Code, Cursor, Codex, Gemini, Windsurf). You get back one of four verdicts with explicit reasoning:

- Build now: real wedge, defensible moat, team that can actually win

- Validate first: promising, but one assumption needs a cheap experiment first (the tool designs the experiment for you)

- Park: interesting, but the conditions aren’t there yet

- No-go: structural flaw; save yourself the quarter

Every verdict closes with one concrete action you can take this week. Not “validate demand.” Not “think about X.” Something specific, named, and doable before Friday.

Under the hood it scores 10 qualification dimensions and 7 defensibility dimensions, each with written reasoning and cited sources. It defaults to skepticism, which is the opposite of the cheerful agreement you get from most AI tools when you pitch them an idea.
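To make that shape concrete, here is a minimal sketch of how such a report could be modelled. It is an illustration, not the repo's actual schema: the class names, the field names, and the 1-to-5 score scale are all assumptions of mine. Only the four verdicts, the 10 + 7 dimension counts, the written reasoning with cited sources, and the single closing action come from the description above.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    """The four verdicts named in the post."""
    BUILD_NOW = "Build now"
    VALIDATE_FIRST = "Validate first"
    PARK = "Park"
    NO_GO = "No-go"


@dataclass(frozen=True)
class DimensionScore:
    """One scored dimension. Field names and the 1-5 scale are hypothetical."""
    name: str
    score: int
    reasoning: str
    sources: tuple[str, ...] = ()

    def __post_init__(self) -> None:
        if not 1 <= self.score <= 5:
            raise ValueError(f"score for {self.name!r} must be between 1 and 5")
        if not self.reasoning.strip():
            raise ValueError(f"dimension {self.name!r} needs written reasoning")


@dataclass(frozen=True)
class Report:
    """A verdict plus the scored dimensions and one concrete next action."""
    verdict: Verdict
    qualification: tuple[DimensionScore, ...]
    defensibility: tuple[DimensionScore, ...]
    next_action: str

    def __post_init__(self) -> None:
        # The post states 10 qualification and 7 defensibility dimensions.
        if len(self.qualification) != 10:
            raise ValueError("expected 10 qualification dimensions")
        if len(self.defensibility) != 7:
            raise ValueError("expected 7 defensibility dimensions")
        # Every verdict closes with one concrete action.
        if not self.next_action.strip():
            raise ValueError("a report must close with one concrete action")


# Usage with placeholder dimension names (not the framework's real ones).
qualification = tuple(
    DimensionScore(f"qualification-{i}", 3, "placeholder reasoning")
    for i in range(1, 11)
)
defensibility = tuple(
    DimensionScore(f"defensibility-{i}", 2, "placeholder reasoning")
    for i in range(1, 8)
)
report = Report(
    verdict=Verdict.VALIDATE_FIRST,
    qualification=qualification,
    defensibility=defensibility,
    next_action="Run the designed experiment with five prospects before Friday",
)
print(report.verdict.value)
```

The sketch deliberately stops short of turning scores into a verdict. That mapping is where the framework's opinionated thresholds live, and I would rather not guess at them here.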

Why this particular problem

Most of my professional life has been spent watching organisations spend money (sometimes a lot of it) on software initiatives that a rigorous qualification conversation would have parked, validated, or killed before the first line of code. My research on digital transformation for SMEs is about the same failure mode from the other side: teams with less slack, fewer specialist resources, and less room for expensive mistakes, committing to the wrong thing for lack of a cheap way to pressure-test it.

And then there’s the audience I most identify with: developers and indie builders pouring evenings and weekends into a side project, investing the only hours they truly own (sometimes for months, sometimes for years) before discovering the idea never had a wedge in the first place. The cost there isn’t measured in budget. It’s measured in evenings that should have gone to family, sleep, or the next idea that would have actually shipped.

In 2026, building software is trivial. AI has made shipping a working prototype almost costless.

Deciding what is actually worth building is the skill that’s quietly become the bottleneck.

Worth Building is the tool I wish I’d had the first five times I watched a team commit a quarter to an idea that a thirty-minute conversation would have parked or killed.

The scary part of publishing

Releasing something open source means exposing your thinking to public scrutiny. Not the polished version you’d put in a client deck. The actual reasoning, the opinionated scoring thresholds, the moat categories you chose to weight over the ones you didn’t. People will disagree with every dimension. Some will disagree loudly.

That is, genuinely, the point. The whole reason to make it public rather than keep it as a private consulting tool is that the sharp disagreements are where the framework gets better. A rubric that nobody pushes back on is usually a rubric that nobody uses.

If you read it and think I’ve weighted something wrong, open an issue. If you run a verdict and it aged badly, open an issue. Especially that one.

Gratitude, belatedly paid

Four other Claude Code projects did serious work that I openly mined: Claude-Business-Evaluator by Daniel Rosehill, project-idea-validator by VoltAgent, startup-skills by Breno Werneck, and show-me-the-money by iamzifei. Their work is called out in the repo’s NOTICE.md, and several of the sharpest ideas in Worth Building (the 6-persona council with blind peer ranking, the anti-sycophancy default, the Riskiest Assumption Test format, the Problem Deconstruction Funnel) are theirs first. I adapted; I didn’t invent.

More broadly: thank you to the maintainers of every piece of software I’ve used without saying thanks for twenty years. You know who you are. Or you don’t, and that’s sort of the point.

Come kick the tires

The repo lives at github.com/kommuniker/worth-building and the landing page is at worthbuilding.dev. MIT license. Works with any AI coding agent that reads AGENTS.md.

If you’re about to commit a quarter to an idea, spend thirty minutes running qualify on it first. If the tool disagrees with you, good. That conversation is the point.

And if you find something sharp to push back on, that first issue from a stranger is the one I’m waiting for. It’s what “open source” finally starts to mean.